The Cure for Artificial Amnesia: How Retrieval-Augmented Generation Taught AI to Remember

Imagine hiring the world's most brilliant analyst. They've read every book, absorbed every paper, and memorized every fact up to a certain date. But the moment you ask them about last week's quarterly report, your company's internal policy, or the name of a customer who called yesterday, they stare back at you blankly.

This was the fundamental frustration with early Large Language Models. Boundless intelligence, frozen in time. Retrieval-Augmented Generation — RAG, as it's known — is the elegant answer. It gives the analyst a filing cabinet.

What is RAG?

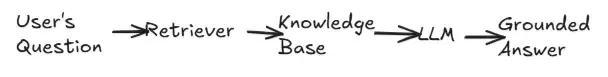

RAG is a design pattern that fuses two distinct systems: a retriever (a search engine that fetches relevant facts) and a generator (an LLM that weaves those facts into fluent language). Neither alone is sufficient. Together, they are formidable.

When a question arrives, it isn't passed directly to the LLM. Instead, it is first converted into an embedding and used to search a vector database built from your documents. The most relevant passages surface. Only then does the LLM receive the question, now enriched with real, specific, current context.

The pipeline, step by step:

1. The user asks a question.

2. The question is converted into a vector embedding.

3. A vector database is searched for the most semantically similar chunks.

4. The top chunks are injected into the LLM's context window.

5. The LLM generates an answer grounded in that retrieved evidence.
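The five steps above can be sketched in a few lines of Python. This is a minimal illustration, not a reference implementation: `Retriever`, `Generator`, `build_prompt`, and `answer` are hypothetical names introduced here, the prompt wording is only one possible choice, and the retriever and model are passed in as plain functions so any vector database or LLM client could stand behind them.

```python
from typing import Callable, List

# Hypothetical call shapes for this sketch: any retriever and any LLM client
# can be plugged in as long as they match these signatures.
Retriever = Callable[[str, int], List[str]]   # (question, top_k) -> chunks
Generator = Callable[[str], str]              # prompt -> answer text


def build_prompt(question: str, chunks: List[str]) -> str:
    """Step 4: place the retrieved chunks in the prompt as numbered sources."""
    sources = "\n".join(f"[{i}] {chunk}" for i, chunk in enumerate(chunks, start=1))
    return (
        "Answer using only the sources below. "
        "If they do not contain the answer, say so.\n\n"
        f"Sources:\n{sources}\n\n"
        f"Question: {question}\n"
        "Answer:"
    )


def answer(question: str, retrieve: Retriever, generate: Generator, top_k: int = 3) -> str:
    chunks = retrieve(question, top_k)        # steps 2-3: embed the question, search the index
    prompt = build_prompt(question, chunks)   # step 4: inject chunks into the context
    return generate(prompt)                   # step 5: generate a grounded answer


if __name__ == "__main__":
    # Stand-ins so the sketch runs without a vector database or a model.
    def fake_retrieve(question: str, top_k: int) -> List[str]:
        corpus = ["Refunds are accepted within 30 days.", "Support is open on weekdays."]
        return corpus[:top_k]

    def fake_generate(prompt: str) -> str:
        return prompt  # echo the prompt so the assembled context is visible

    print(answer("What is the refund window?", fake_retrieve, fake_generate))
```

Numbering the sources in the prompt is a design choice rather than a requirement; it makes it easier to ask the model to cite which chunk supported each claim.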

The Three Pillars

Chunking

Raw documents are sliced into bite-sized pieces — paragraphs, sentences, or sliding windows — small enough to be precise, large enough to carry meaning. Chunk size is one of the most consequential tuning decisions in any RAG system.
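As a rough illustration of the sliding-window option, the sketch below splits text into overlapping word windows. The function name `chunk_words` and the default `size` and `overlap` values are assumptions chosen for the example, not recommended settings; production systems often split on tokens, sentences, or document structure instead.

```python
from typing import List


def chunk_words(text: str, size: int = 200, overlap: int = 40) -> List[str]:
    """Split text into windows of `size` words, each sharing `overlap` words with the previous one."""
    if size <= 0:
        raise ValueError("size must be positive")
    if not 0 <= overlap < size:
        raise ValueError("overlap must be in the range [0, size)")

    words = text.split()
    step = size - overlap  # how far the window advances each time
    chunks: List[str] = []
    for start in range(0, len(words), step):
        chunks.append(" ".join(words[start:start + size]))
        if start + size >= len(words):
            break  # this window already reached the end of the text
    return chunks


if __name__ == "__main__":
    for chunk in chunk_words("a b c d e f g h i j", size=4, overlap=1):
        print(chunk)
```

The overlap exists so that a sentence falling on a boundary still appears whole in at least one chunk. Larger windows carry more context per chunk but make each match less precise, which is the trade-off the paragraph above describes.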

Embedding

Each chunk is converted into a dense numerical vector — a point in high-dimensional space where meaning determines proximity. Similar ideas cluster together. The sentence "the cat sat on the mat" and "a feline rested on a rug" end up near each other; "quarterly earnings report" ends up somewhere else entirely.

Retrieval

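At query time, your question becomes a vector too. Chunks are ranked by a similarity measure such as cosine similarity, and at scale an approximate nearest-neighbor index (like HNSW) finds the closest chunks quickly without comparing the query against every stored vector, surfacing what is most relevant to the question at hand.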
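To make the ranking step concrete, here is a small sketch of exact (brute-force) retrieval by cosine similarity. The three-dimensional vectors are toy values invented for the example, and `cosine_similarity` and `top_k` are hypothetical helper names. Real embeddings have hundreds or thousands of dimensions, and an approximate index such as HNSW would replace the full scan once the collection gets large.

```python
import math
from typing import List, Sequence, Tuple


def cosine_similarity(a: Sequence[float], b: Sequence[float]) -> float:
    """cos(a, b) = (a . b) / (|a| * |b|); returns 0.0 if either vector is all zeros."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


def top_k(query_vec: Sequence[float],
          chunk_vecs: Sequence[Sequence[float]],
          k: int = 3) -> List[Tuple[int, float]]:
    """Score every chunk against the query and return the k best (index, score) pairs."""
    scored = [(i, cosine_similarity(query_vec, vec)) for i, vec in enumerate(chunk_vecs)]
    scored.sort(key=lambda pair: pair[1], reverse=True)
    return scored[:k]


if __name__ == "__main__":
    # Toy vectors: chunks 0 and 1 point in roughly the same direction as the query.
    chunk_vecs = [[0.9, 0.1, 0.0], [0.8, 0.2, 0.1], [0.0, 0.1, 0.9]]
    query_vec = [1.0, 0.0, 0.0]
    for index, score in top_k(query_vec, chunk_vecs, k=2):
        print(index, round(score, 3))
```

The full scan costs one comparison per stored chunk for every query. Approximate nearest-neighbor indexes give up a little accuracy to avoid that linear cost.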

Before and After the Filing Cabinet

| | Without RAG | With RAG |
|---|---|---|
| Mental model | The Sealed Library | The Living Archive |
| Knowledge | Frozen at training cutoff | Current and domain-specific |
| Failures | Confident hallucination | Transparent retrieval gaps |
| Citations | None | Traceable to source chunks |

Without RAG: A genius scholar who read every book in 2024 and was then locked in a room. Ask about today's news? A blank stare. Ask about your company's NDA clause? Silence. Their brilliance is real — but its reach is frozen at the moment the door closed. Worse, they will sometimes confabulate — filling gaps in memory with confident-sounding fiction.

With RAG: The same genius, now with a direct line to a live database. Every document, policy, article, transcript — instantly retrievable. They synthesize rather than memorize. Their answers are precise, current, and citable.

Where RAG Shines

- Finance - Policy querying, earnings analysis, compliance Q&A

- Healthcare - Drug lookups, clinical trial search, patient record Q&A

- Engineering - Codebase search, internal docs, debugging with history

- Education - Curriculum-grounded tutors, textbook-aware assistants

- Legal - Case law retrieval, contract review, precedent matching

- Commerce - Product discovery, support bots with live inventory

In each of these domains, the common thread is the same: an LLM that would otherwise hallucinate or go stale is anchored to a body of real, current, domain-specific knowledge.

The Honest Caveats

RAG has real failure modes worth understanding before you build.

Garbage In, Garbage Out

RAG is only as good as the knowledge base behind it. Stale, incomplete, or poorly chunked documents will produce confidently wrong answers. Investing in data quality is not optional.

The Lost-in-the-Middle Problem

LLMs tend to pay more attention to information at the start and end of their context window. Chunks buried in the middle may get underweighted, even if they contain the most relevant information.

Latency Overhead

Every query now involves embedding, vector search, and context assembly before the LLM even starts generating. In latency-critical applications, this pipeline cost matters and must be engineered around.

Retrieval Failures

If the right chunk isn't retrieved — perhaps because the question is phrased unusually, or the document wasn't indexed correctly — the model will answer without the key information, often without knowing it. Evaluation and monitoring are essential.
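One simple way to start monitoring retrieval is to keep a small set of questions with known relevant chunks and measure how often the retriever surfaces at least one of them. The sketch below assumes chunks carry string IDs and that a `retrieve(question, k)` function returns the IDs of the top k chunks. Both are assumptions made for illustration, and `hit_rate_at_k` is a hypothetical helper, not part of any particular library.

```python
from typing import Callable, List, Sequence, Set, Tuple

# Each evaluation case pairs a question with the IDs of chunks known to answer it.
EvalCase = Tuple[str, Set[str]]


def hit_rate_at_k(eval_set: Sequence[EvalCase],
                  retrieve: Callable[[str, int], List[str]],
                  k: int = 5) -> float:
    """Fraction of questions where at least one expected chunk ID appears in the top k."""
    if not eval_set:
        return 0.0
    hits = 0
    for question, expected_ids in eval_set:
        retrieved_ids = set(retrieve(question, k))
        if retrieved_ids & expected_ids:
            hits += 1
    return hits / len(eval_set)


if __name__ == "__main__":
    # Stand-in retriever with canned results, so the sketch runs on its own.
    canned = {"q1": ["a", "c"], "q2": ["c", "b"], "q3": ["c", "d"]}

    def fake_retrieve(question: str, k: int) -> List[str]:
        return canned[question][:k]

    cases = [("q1", {"a"}), ("q2", {"b"}), ("q3", {"z"})]
    print(hit_rate_at_k(cases, fake_retrieve, k=2))
```

Tracking a number like this over time makes silent retrieval failures visible, for example after changing the chunk size or swapping the embedding model.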

The Takeaway

RAG isn't a trend — it's a fundamental shift in how we think about AI reliability. The industry is moving away from "trust the model's training" toward "trust the model's reasoning on retrieved evidence." One is faith. The other is engineering.

As vector databases mature, embedding models sharpen, and retrieval techniques grow more sophisticated — hybrid search, reranking, HyDE, multi-hop reasoning — RAG will only deepen its hold on production AI systems.

Build the filing cabinet. The analyst already knows what to do with it.

Author: Sathmi Rajspaksha, AI / ML Intern